MongoDB vs. Hadoop

The amount of data on the internet has grown dramatically in recent years, and the pace keeps increasing. Today, industries such as telecom, retail, social media, and healthcare continually collect and generate enormous volumes of user data. This article covers the benefits and limitations of both MongoDB and Hadoop in detail.

Processing this volume of data with traditional RDBMSs is impractical and poses a major challenge. To solve this problem, newer Big Data solutions are designed to process massive volumes of unstructured data in a timely and cost-effective manner.

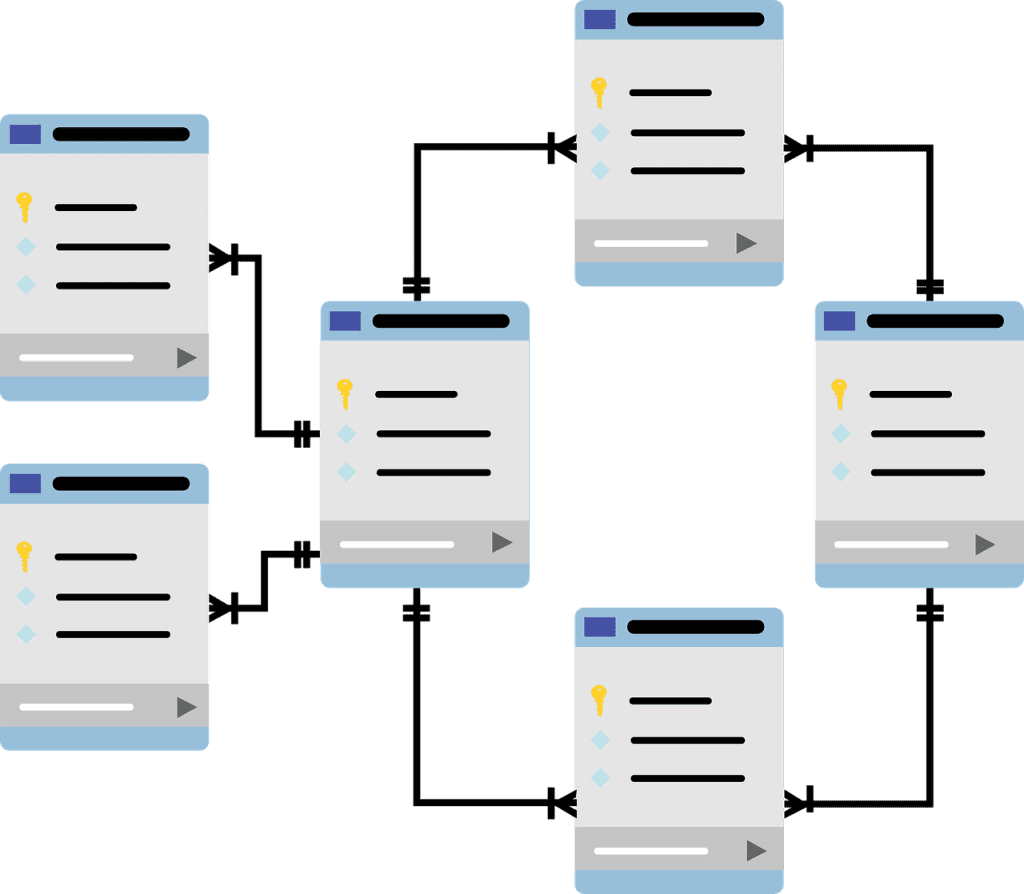

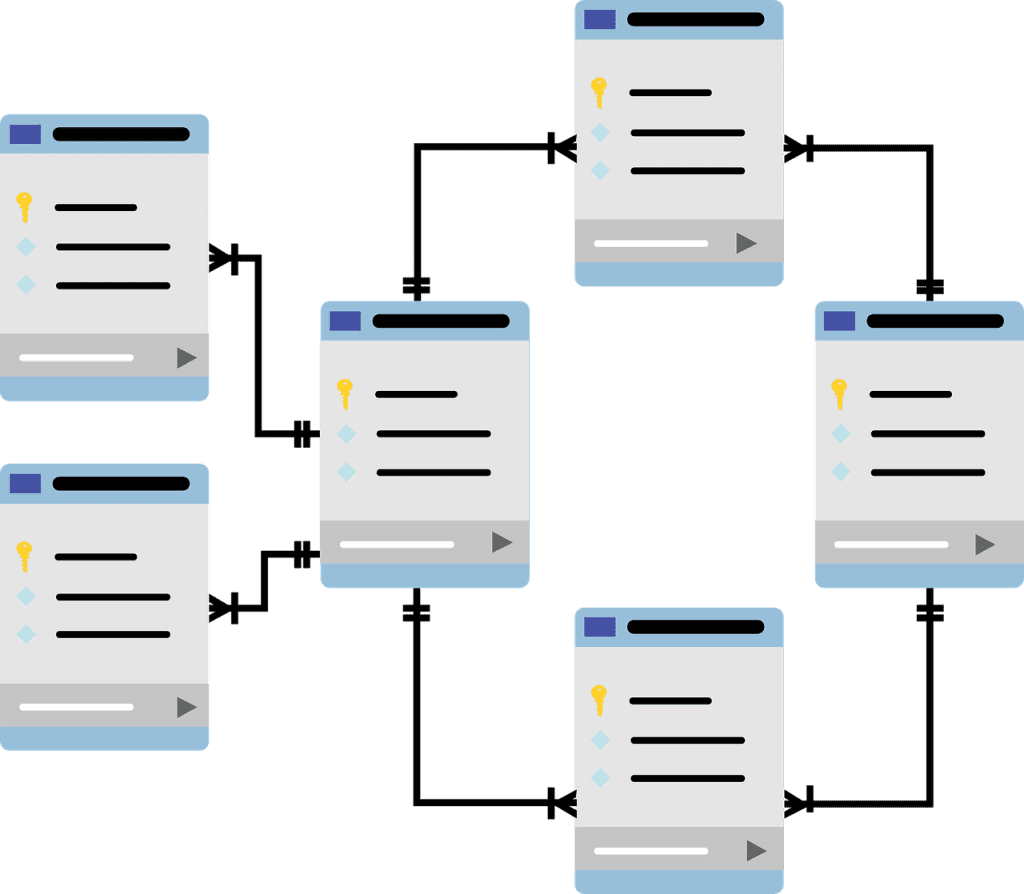

There are many solutions for dealing with extensive unstructured data, but Hadoop and MongoDB have emerged as two popular choices for harnessing Big Data. In short, MongoDB is a NoSQL database, whereas Hadoop is a framework. The two take different approaches to storing and processing massive volumes of data.

What is MongoDB?

MongoDB is a flexible, document-oriented, cross-platform, distributed NoSQL database written mainly in C++. It supports ad-hoc querying and horizontal scalability. The software company 10gen began developing MongoDB in 2007, initially as part of a cloud-based app engine whose goal was to run various software and services smoothly. The app engine was called Babble, and the database was called MongoDB.

When this idea did not go as planned, the company released MongoDB to the public as an open-source Big Data solution. It extended what existing RDBMSs offered by opening up a new range of use cases. Because MongoDB is a document-oriented database, users can retrieve several related data fields in a single query against one document, whereas a traditional RDBMS typically requires joins or multiple queries to assemble the same data from rows and columns spread across several tables.
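The difference between the two data layouts can be sketched in plain Python, without a running database. Everything here is illustrative: the `customers`, `orders`, and `order_documents` data and the `find` helper are hypothetical stand-ins, not MongoDB's API. The dotted field paths only imitate the dot notation MongoDB uses for embedded fields.

```python
# Hypothetical sample data; the names and fields are illustrative only.

# Relational layout: one logical order is spread over two "tables".
customers = [{"customer_id": 1, "name": "Ada", "city": "London"}]
orders = [{"order_id": 100, "customer_id": 1, "total": 42.5}]


def relational_lookup(order_id):
    """Find the order in one table, then join to the other for the customer."""
    order = next(o for o in orders if o["order_id"] == order_id)
    customer = next(
        c for c in customers if c["customer_id"] == order["customer_id"]
    )
    return {
        "order_id": order["order_id"],
        "total": order["total"],
        "name": customer["name"],
        "city": customer["city"],
    }


# Document layout: the same information stored as one nested document.
order_documents = [
    {
        "order_id": 100,
        "total": 42.5,
        "customer": {"name": "Ada", "city": "London"},
    },
]


def matches(document, criteria):
    """Return True if every dotted field path in criteria has the given value."""
    for path, expected in criteria.items():
        value = document
        for key in path.split("."):
            if not isinstance(value, dict) or key not in value:
                return False
            value = value[key]
        if value != expected:
            return False
    return True


def find(collection, criteria):
    """Filter a list of documents with a single multi-field criteria dict."""
    return [doc for doc in collection if matches(doc, criteria)]
```

Calling `find(order_documents, {"customer.city": "London", "total": 42.5})` checks a top-level field and an embedded field in one pass, while `relational_lookup(100)` has to visit both tables to assemble the same record.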

What is Hadoop?

Apache Hadoop is not a single program, application, or database. It is an open-source, Java-based software framework used to store, process, and manage Big Data. The Hadoop platform uses a cluster of computers to analyze and process large datasets in parallel, which makes the work faster and more efficient. The first release of Apache Hadoop was in 2006.

The core components of the Apache Hadoop framework are Hadoop Common, HDFS (Hadoop Distributed File System), YARN (Yet Another Resource Negotiator), and MapReduce. Hadoop Common consists of the libraries and utilities used by the other Hadoop modules.

HDFS is a distributed file system that handles data storage on commodity machines. It divides large data files into smaller blocks and stores them scattered across the cluster. YARN acts as the resource manager: it tracks the compute resources available in the cluster and allocates them efficiently when scheduling users' applications. YARN also lets processing engines other than MapReduce run on the same cluster.
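The idea of cutting a file into blocks and scattering them over a cluster can be illustrated with a small sketch. This is not how HDFS is implemented: the function names and node names are hypothetical, and the round-robin placement is a deliberate simplification (real HDFS uses a rack-aware placement policy). The 128 MB block size and replication factor of 3 mirror common HDFS defaults but are passed in as plain parameters here.

```python
def split_into_blocks(file_size, block_size):
    """Return the sizes (in bytes) of the blocks a file would be cut into."""
    if file_size < 0 or block_size <= 0:
        raise ValueError("file_size must be >= 0 and block_size must be > 0")
    full_blocks, remainder = divmod(file_size, block_size)
    sizes = [block_size] * full_blocks
    if remainder:
        # The last block only holds whatever data is left over.
        sizes.append(remainder)
    return sizes


def place_blocks(num_blocks, nodes, replication):
    """Assign each block to `replication` distinct nodes, round-robin.

    Simplified placement for illustration; it ignores racks, free disk
    space, and node failures.
    """
    if replication > len(nodes):
        raise ValueError("replication cannot exceed the number of nodes")
    placement = {}
    for block in range(num_blocks):
        placement[block] = [
            nodes[(block + offset) % len(nodes)] for offset in range(replication)
        ]
    return placement


MB = 1024 * 1024
blocks = split_into_blocks(300 * MB, 128 * MB)
layout = place_blocks(len(blocks), ["node-a", "node-b", "node-c", "node-d"], 3)
```

With these inputs, a 300 MB file becomes two full 128 MB blocks plus one 44 MB block, and each block is copied to three of the four hypothetical nodes, so losing a single node does not lose any block.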

Hadoop MapReduce takes care of large-scale data processing. It processes the data stored across the cluster using distributed computing: a job is split into tasks that several nodes run in parallel, and the partial results are then combined into the final output.
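The MapReduce programming model itself can be shown with the classic word-count example. The sketch below runs in a single Python process and only mirrors the map, shuffle, and reduce phases; it does not use Hadoop's API, and all function names are illustrative. On a real cluster the map and reduce calls would run as parallel tasks on different nodes.

```python
from collections import defaultdict


def map_phase(line):
    """Map: emit a (word, 1) pair for every word in one input line."""
    return [(word.lower(), 1) for word in line.split()]


def shuffle(pairs):
    """Shuffle: group all emitted values by their key."""
    grouped = defaultdict(list)
    for key, value in pairs:
        grouped[key].append(value)
    return grouped


def reduce_phase(key, values):
    """Reduce: combine all values for one key into a single result."""
    return key, sum(values)


def word_count(lines):
    """Run the three phases in sequence over an iterable of text lines."""
    mapped = [pair for line in lines for pair in map_phase(line)]
    grouped = shuffle(mapped)
    return dict(reduce_phase(key, values) for key, values in grouped.items())
```

For example, `word_count(["big data", "Big clusters"])` counts `big` twice and each of the other words once. Because every map call looks at only one line and every reduce call at only one key, the work can be spread over many machines without them needing to coordinate during a phase.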

Why Do Firms Need Professionals Skilled in MongoDB and Hadoop?

Because data is critical to any organization, MongoDB and Hadoop are strong choices for handling Big Data. With their rich feature sets and relatively few drawbacks, both can handle massive volumes of data.

Organizations can choose MongoDB if they need to handle a massive volume of real-time data, or Hadoop if they need large-scale batch processing. They can also combine the two so that each offsets the other's weaknesses, depending on the business environment.

Top Comparisons Between MongoDB and Hadoop

Hadoop and MongoDB share some similarities, and each has its own advantages and disadvantages. The two platforms take different approaches to processing, handling, and storing data.

Similarities Between Hadoop and MongoDB:

Features shared by Hadoop and MongoDB include cross-platform OS support, the ability to handle massive volumes of data, MapReduce, file system storage, and server-side scripting support.

Advantages of MongoDB:

● MongoDB is cost-effective because it comes as a single product.

● MongoDB supports OLTP workloads and is easier to set up than Hadoop.

● MongoDB manages memory efficiently, which helps it process real-time analytics quickly.

● Its strength lies in its robustness, and it is a far more flexible replacement for legacy RDBMSs.

● MongoDB delivers data to clients quickly because its real-time data is readily available, unlike in a Hadoop setup.

● MongoDB prioritizes low latency when handling real-time data.

Advantages of Hadoop:

● Apache Hadoop’s biggest strength is batch processing; it can easily handle long-running ETL jobs and analysis.

● Hadoop’s MapReduce is better suited than MongoDB to processing massive amounts of data.

● Unlike MongoDB, Hadoop accepts data in any format, which eliminates the extra data transformation otherwise needed before processing.

● Hadoop offers an extensive framework that supports a wide variety of Big Data requirements and handles large volumes of data with ease.

Limitations of MongoDB:

● Fault tolerance is one of MongoDB’s main limitations: it can sometimes lose data, and it handles only moderate data sizes efficiently.

● MongoDB integrates poorly with existing RDBMSs and has issues with locking and constraints.

● Another major disadvantage of MongoDB is that it accepts data only in JSON or CSV format, so data in other formats may require additional transformation.

Limitations of Hadoop:

● Hadoop cannot handle real-time data mining very efficiently because it favors high throughput over low latency.

● Hadoop is somewhat costlier than MongoDB because it comprises a collection of software components.

Choosing Between a Master’s in MongoDB and Hadoop

NoSQL databases are expected to increasingly replace traditional RDBMSs as a more flexible and usable way to organize data. With the growing adoption of Big Data, there is massive demand for NoSQL database specialists worldwide, and training in Hadoop and MongoDB is fast becoming an industry trend.

To Conclude

Job postings for MongoDB and Hadoop have also been increasing steadily over the past two years, in step with the growth and popularity of Big Data. So why wait? Take the next step to advance your career: complete an online instructor-led training course at a reputable institution to excel in Big Data.